Haiguang Information (海光信息) — Ying Zhiwei (应志伟): "Software Defenses Can Be Bypassed; the Foundation of AI Security Is Untamperable Hardware"

Warning from Haiguang: software patches are not enough

Ying Zhiwei (应志伟) of Haiguang Information (海光信息) has reportedly warned that the growing stacks of software defenses built around large language models (LLMs) are fragile and can ultimately be bypassed, and that real AI security must rest on hardware that cannot be tampered with. The warning lands amid a flurry of Chinese and international work that tries to fix LLM limitations, especially short, brittle memory, with engineering band-aids: external memory layers, compression tricks and retrieval systems. But do those fixes hold up once determined, sophisticated attackers enter the picture?

Memory "patches" proliferate, but none of them is a cure

Chinese and international labs have produced many memory-layer tools, including Claude-Mem (reportedly 50,000+ GitHub stars), Mem0, Letta (formerly MemGPT), LongLLMLingua and Acon. They use compression, external databases or layered virtual-memory designs to help models remember more across sessions. Each approach trades off capacity, latency and model portability: compression is cheap but limited; external memories scale but add system complexity; soft-prompt encodings achieve extreme compression but work only with the specific, long-lived model they were built for. Some systems reportedly post large benchmark gains (lower token use, faster responses, higher scores on multi-hop tasks), yet industry insiders describe them as pragmatic stopgaps rather than cures.
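To make the "external memory" idea concrete, here is a minimal sketch of the general pattern: snippets are stored outside the model, and only the few most relevant ones are pulled back into the prompt. It is not how Mem0, Letta or Claude-Mem are actually implemented; the class and function names are hypothetical, and simple word-overlap scoring stands in for the embedding search a real system would likely use.

```python
from dataclasses import dataclass, field


@dataclass
class ExternalMemory:
    """Hypothetical external memory layer: text lives outside the model's
    context window and is retrieved on demand."""
    snippets: list[str] = field(default_factory=list)

    def remember(self, text: str) -> None:
        # Persist a snippet (a real system might write to a database).
        self.snippets.append(text)

    def recall(self, query: str, k: int = 2) -> list[str]:
        # Score each snippet by how many words it shares with the query.
        # This word overlap is a stand-in for embedding similarity.
        query_words = set(query.lower().split())
        scored = [
            (len(query_words & set(s.lower().split())), i, s)
            for i, s in enumerate(self.snippets)
        ]
        # Drop irrelevant snippets, then rank by score (ties keep insertion order).
        scored = [t for t in scored if t[0] > 0]
        scored.sort(key=lambda t: (-t[0], t[1]))
        return [s for _, _, s in scored[:k]]


def build_prompt(memory: ExternalMemory, query: str, k: int = 2) -> str:
    # Only the recalled snippets enter the context window, not the full history.
    recalled = memory.recall(query, k)
    return "\n".join(["Relevant memory:", *recalled, "", "User: " + query])


if __name__ == "__main__":
    mem = ExternalMemory()
    mem.remember("user prefers python for examples")
    mem.remember("project deadline is friday")
    mem.remember("user dislikes long answers")
    print(build_prompt(mem, "which language does the user prefer for examples"))
```

The trade-off named above shows up even at this scale: the model's context stays small, but correctness now depends on a separate retrieval component that must be stored, secured and kept in sync.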

Architecture and geopolitics push toward hardware roots of trust

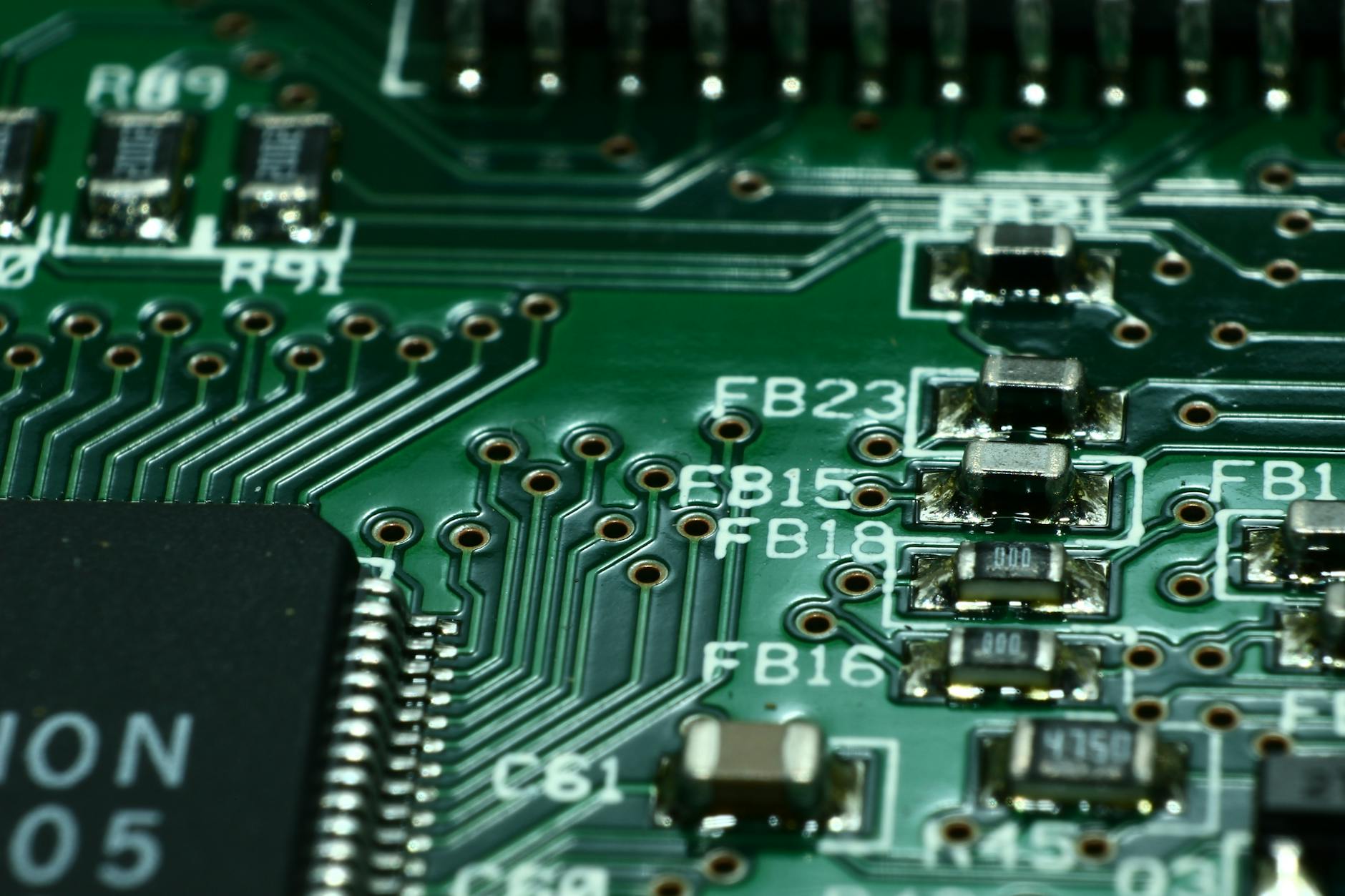

Researchers also argue that the root problem is architectural. Standard Transformer self-attention compares every token with every other token, so its cost grows quadratically with context length: doubling the window roughly quadruples the attention work, which makes ever-larger windows computationally prohibitive. Efforts to rethink attention, such as DeepSeek's sparse-attention work and Qwen3-Next, are important. But Ying's point widens the frame: new software and new model designs are still software. Hardware guarantees, such as tamper-resistant chips, trusted execution environments (TEEs) and remote attestation, are what ultimately back claims about a model's provenance, integrity and security in the field. With U.S. export controls and broader supply-chain restrictions limiting access to advanced semiconductors, China is reportedly accelerating domestic hardware initiatives to pair new AI models with trusted silicon.
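The quadratic-scaling argument can be checked with simple arithmetic. The sketch counts the entries in one attention head's score matrix for one layer, plus the memory those entries would need if materialized in 16-bit floats. It assumes a naive implementation; fused kernels avoid storing the full matrix but still compute every score. The "sparse" function is a hypothetical fixed-budget scheme (each query scores at most a set number of keys), chosen only for contrast; it is not DeepSeek's or Qwen's actual method.

```python
def dense_score_entries(context_len: int) -> int:
    """Entries in one head's n x n attention-score matrix (one layer)."""
    return context_len * context_len


def sparse_score_entries(context_len: int, keys_per_query: int) -> int:
    """Hypothetical fixed-budget sparse attention: each query scores at most
    `keys_per_query` keys instead of all n."""
    return context_len * min(keys_per_query, context_len)


def score_matrix_mib(entries: int, bytes_per_entry: int = 2) -> float:
    """Memory in MiB if the scores were stored as 16-bit floats (2 bytes each)."""
    return entries * bytes_per_entry / 2**20


if __name__ == "__main__":
    for n in (4_096, 32_768, 131_072):
        dense = dense_score_entries(n)
        sparse = sparse_score_entries(n, keys_per_query=2_048)
        print(f"n={n:>7}: dense {score_matrix_mib(dense):>8.0f} MiB, "
              f"sparse {score_matrix_mib(sparse):>6.0f} MiB")
```

Going from 4,096 to 32,768 tokens (8x longer) multiplies the dense score entries by 64, while the fixed-budget sparse variant grows only 8x. That gap is why attention redesigns matter for long context, even though, as Ying argues, they do nothing for hardware-level trust.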

Can a software stack ever be made as trustworthy as a sealed hardware root of trust? Ying says no. As companies stitch memory fixes on top of models, the strategic question sharpens: invest more in clever software, or build a hardware foundation that attackers cannot rewrite? The answer will shape who can safely deploy large-scale AI in contested and regulated environments.
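One way to see what a "hardware foundation that attackers cannot rewrite" buys is the measured-boot hash chain used by TPM-style roots of trust. The following is a heavily simplified sketch, assuming the TPM 2.0 "extend" rule (new value = SHA-256(old value || digest of the component)) and a register that starts at all zeros. In real hardware the register lives inside the chip and its value is reported in a quote signed by a key that never leaves the chip; that signing step, which is what makes the value trustworthy, is omitted here. The component names are hypothetical, and nothing in the article says which mechanism Haiguang uses.

```python
import hashlib
import hmac

# A SHA-256 measurement register is assumed to start as 32 zero bytes at reset.
ZERO_REGISTER = bytes(32)


def extend(register: bytes, component: bytes) -> bytes:
    """TPM-style extend: new = SHA-256(old || SHA-256(component)).
    The register can only be extended, never overwritten directly."""
    measurement = hashlib.sha256(component).digest()
    return hashlib.sha256(register + measurement).digest()


def measure_chain(components: list[bytes]) -> bytes:
    # Each boot stage measures the next one before handing over control.
    register = ZERO_REGISTER
    for component in components:
        register = extend(register, component)
    return register


def verify(reported: bytes, known_good: list[bytes]) -> bool:
    """Verifier side: recompute the expected value from known-good images
    and compare in constant time."""
    return hmac.compare_digest(reported, measure_chain(known_good))


if __name__ == "__main__":
    good = [b"firmware v1", b"bootloader v3", b"model-server v2"]
    tampered = [b"firmware v1", b"bootloader v3-patched", b"model-server v2"]
    print("clean boot verifies:", verify(measure_chain(good), good))
    print("tampered boot verifies:", verify(measure_chain(tampered), good))
```

Because each value folds in everything measured before it, changing any single component (or their order) changes the final value, and software running after boot cannot reset the register to hide the change. The security of the scheme therefore rests on the hardware that holds the register and signs the quote, which is the crux of Ying's argument.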