China hackathon shows robots can be tuned fast — but “general” embodied AI remains far off

Fast demos, fragile reality

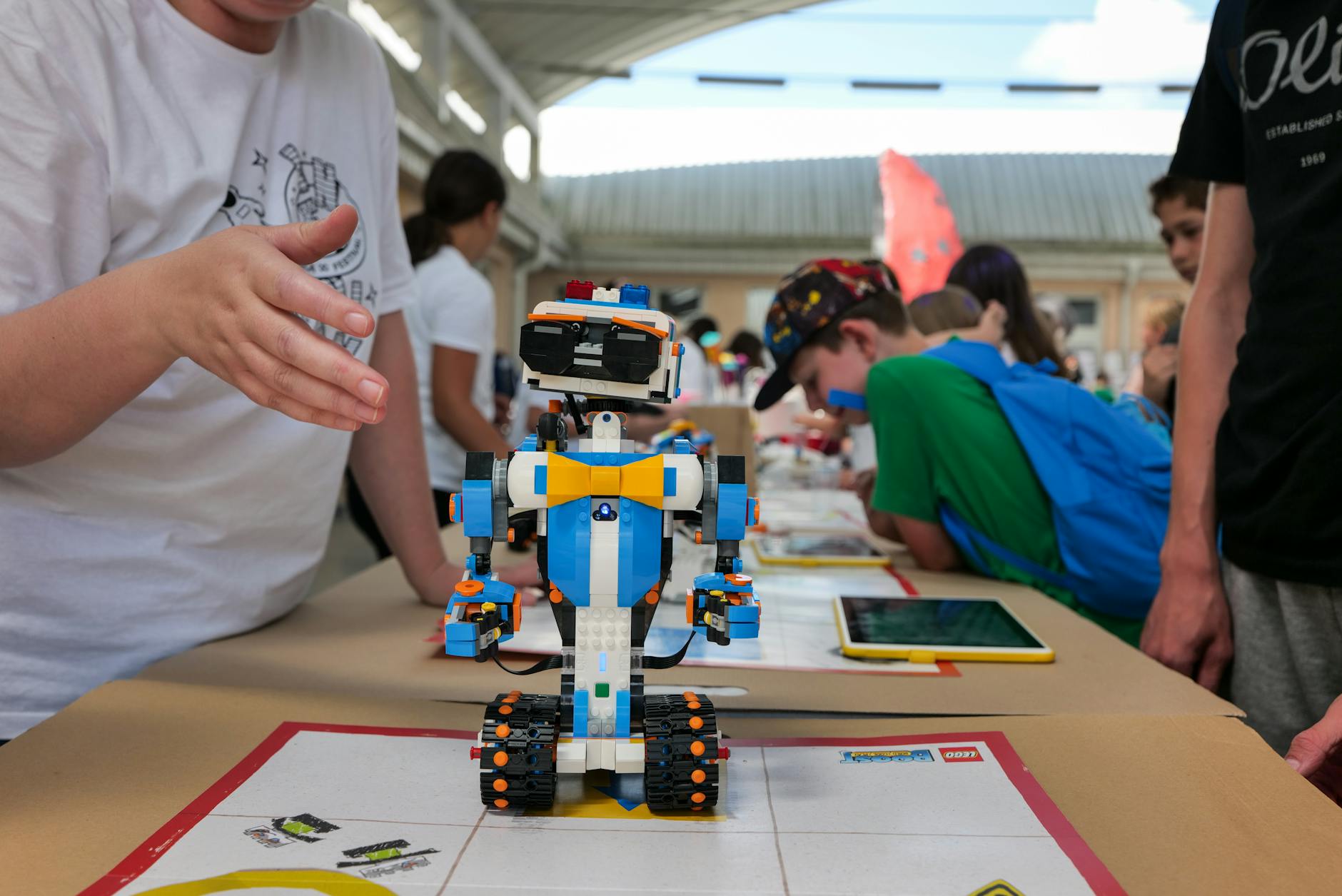

Ifeng Tech reported that a three-day robotics hackathon organized by Zibianliang (自变量) produced a flurry of impressive demos — and an important cautionary lesson. Teams, many made up of students born after 2000, used a shared dataset, on-site data-collection rigs and generous compute to get robots performing pick-and-place and other routine tasks in days. The result looked promising. But were these fast wins meaningful progress toward truly general embodied intelligence? The answer, according to competitors and organizers, is complicated.

A versus B: why hidden tests matter

The event split work into an open A leaderboard and a hidden B leaderboard. A tasks had fixed objects and locations, so teams could fine-tune relentlessly; by some accounts, A-task success rates climbed from 20–70% to nearly 100% within a day, a classic sign of overfitting. When the B tasks introduced new fruit types, distractors and changed layouts, many high-performing teams suddenly faltered. Nanjing University of Posts and Telecommunications (南京邮电大学) contestant Yuan Haokuan told InfoQ that short, local retraining helped a little, but the models lacked the data diversity to generalize. The lesson was clear: short-cycle engineering can reproduce demos, but that is not the same as robustness.
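The difference between the two leaderboards can be expressed as a simple number: a team's success rate on the open A tasks minus its success rate on the hidden B tasks. The sketch below is only an illustration of that idea, not the organizers' scoring method. The `Trial` record, the function names, the team name and the trial outcomes are all hypothetical and were invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    team: str       # hypothetical team identifier
    split: str      # "A" for the open leaderboard, "B" for the hidden one
    success: bool   # whether the robot completed the task on this attempt


def success_rate(trials, team, split):
    """Fraction of successful attempts for one team on one leaderboard."""
    relevant = [t for t in trials if t.team == team and t.split == split]
    if not relevant:
        raise ValueError(f"no trials recorded for {team!r} on split {split!r}")
    return sum(t.success for t in relevant) / len(relevant)


def generalization_gap(trials, team):
    """Open-task success rate minus hidden-task success rate.

    A large positive gap suggests tuning to the fixed A setup rather than
    a policy that carries over to new objects and layouts.
    """
    return success_rate(trials, team, "A") - success_rate(trials, team, "B")


# Invented outcomes for illustration only: 9 of 10 A attempts succeed,
# 4 of 10 B attempts succeed.
trials = (
    [Trial("team_x", "A", i < 9) for i in range(10)]
    + [Trial("team_x", "B", i < 4) for i in range(10)]
)

if __name__ == "__main__":
    gap = generalization_gap(trials, "team_x")
    print(f"A: {success_rate(trials, 'team_x', 'A'):.0%}")
    print(f"B: {success_rate(trials, 'team_x', 'B'):.0%}")
    print(f"gap: {gap:.0%}")
```

Reporting the gap alongside the headline score is one way a leaderboard could make overfitting visible; a team that scores lower on A but keeps a small gap may have the more robust system.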

Base models, benchmarks and broader pressures

Zibianliang’s algorithm partner Gan Ruyi (甘如饴) and CTO Wang Hao (王昊) stressed that stronger base models — not engineering patches for each vertical — will ultimately separate leaders from followers. In parallel, more Chinese groups are launching real-robot benchmark suites — Yuanli Lingji (原力灵机)’s RoboChallenge, Zhiyuan (智元)’s AgiBot World Challenge and Zibianliang’s ManipArena — to force models out of demo mode into repeated, multi-task, constrained evaluation. It has been reported that access to top-end accelerators is increasingly constrained by export controls and trade policy, a factor that may nudge Chinese teams toward data quality and base-model design rather than purely scale-based approaches.

So what now?

For Western readers used to flashy demo videos, the hackathon is a reminder that speed of adaptation is improving, but so is the ability to manufacture illusions of capability. Many evaluation systems still hide model ownership or isolate interfaces to protect IP, which reduces transparency. If the sector wants real progress, it will need clearer, repeatable real-world benchmarks that distinguish task-specific tuning from genuine generalization. Until then, the open question is whether the industry will be guided by demonstrations or by machines that truly hold up under changing, messy reality.