Crowdfunded Tiiny AI Pocket Lab Raises $1M in Five Hours — A Hit Because It Answers a Local-AI Gap

Fast crowdfunding, focused product

Tiiny AI (本智激活) shot to prominence on Kickstarter when its Pocket Lab, an external AI box, reportedly raised more than $1 million within five hours of launch. The project, priced from $1,399, had reportedly attracted nearly $2.95 million from 2,093 backers at the time of publication. Kickstarter has not seen that kind of early traction since Bambu Lab (拓竹)’s X1 campaign in 2022, a reminder that a compact, easily understood hardware story still sells quickly to Western backers and enthusiasts.

Why buyers are paying up

The pocket-sized pitch is simple: users want a local “Jarvis,” an always-on assistant that preserves privacy, avoids recurring cloud API costs, and doesn’t turn their main workstation into a model host. Tiiny AI’s device does one thing and one thing only: run local large language models (LLMs) of up to about 100B parameters for on-premises inference. It does not replace a PC or Mac; think of it as a dedicated external drive, but for AI compute. For professionals in finance, law and research, and for power users who already resent token bills or unstable agent uptime in the cloud, that proposition is persuasive.

The tech bet: PowerInfer, heterogeneous compute, and the 100B sweet spot

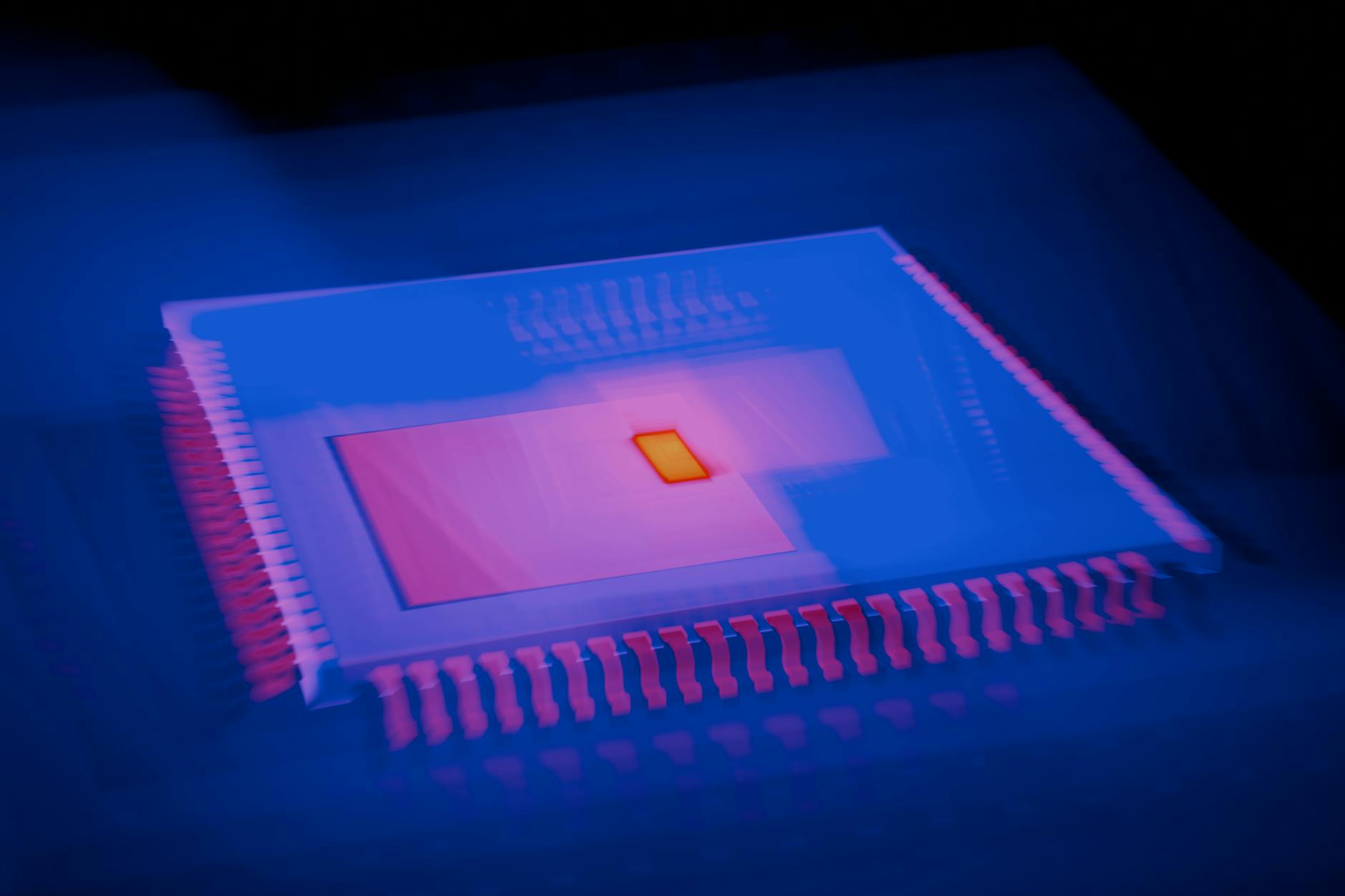

Tiiny AI traces its roots to a project spun out of Shanghai Jiao Tong University’s Parallel and Distributed Systems Lab (上海交通大学并行与分布式系统研究所, IPADS). The team’s open-source inference engine, PowerInfer, reportedly amassed about 9,100 GitHub stars in 2024 and forms the core of the Pocket Lab’s software strategy: smart scheduling across heterogeneous silicon rather than brute-force GPU stacking. The company says the device pairs an Arm-based SoC with a custom dNPU ASIC and that the system can run many open models below 100B parameters with one click. It has been reported that the Kickstarter page lists a combined 190 TOPS figure and that internal tests show competitive token throughput for 120B- and 35B-class scenarios. The company markets these claims as proof that software orchestration can offset hardware limits.

Open questions, critics and the geopolitical frame

Not everyone is convinced. It has been reported that some observers have questioned the marketing, noting that the 120B model cited is a mixture-of-experts (MoE) variant that activates only a slice of its parameters per token, and that TOPS figures from mixed compute blocks are not directly additive. Meanwhile, the wider momentum behind the so-called “lobster” or open-source wave (OpenClaw, Ollama et al.), together with Western export controls on advanced accelerators, has made local inference hardware commercially attractive and strategically relevant. So is the Tiiny AI Pocket Lab an emerging product category or a pragmatic stopgap while cloud and chip ecosystems evolve? Early buyers have voted with their wallets; long-term success will hinge on transparency, real-world performance, and how well the device integrates with rapidly changing model architectures and supply constraints.
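For readers who want to see why the two objections matter, here is a minimal back-of-the-envelope sketch in Python. Every number in it is hypothetical: the expert count, the shared-parameter size, and the 160/30 split between two compute blocks are illustrative assumptions, not published specifications of the Pocket Lab or of any particular model. The helper names `moe_active_params` and `effective_tops` are likewise invented for this illustration, and the parallel-split model of compute is a deliberate simplification.

```python
def moe_active_params(total, shared, num_experts, experts_per_token):
    """Parameters read per token in a simplified MoE model.

    Assumes expert weights are split evenly across experts and that the
    `shared` part (attention, embeddings, router) is used for every token.
    """
    per_expert = (total - shared) / num_experts
    return shared + per_expert * experts_per_token


def effective_tops(tops_a, tops_b, fraction_on_a):
    """Effective rate of two units working in parallel on a fixed work split.

    The side that finishes last sets the time per unit of work, so the
    combined rate only reaches tops_a + tops_b for a perfectly balanced split.
    """
    time_a = fraction_on_a / tops_a
    time_b = (1.0 - fraction_on_a) / tops_b
    return 1.0 / max(time_a, time_b)


# Hypothetical 120B MoE: 4B shared weights, 128 experts, 4 routed per token.
active = moe_active_params(total=120e9, shared=4e9,
                           num_experts=128, experts_per_token=4)
print(f"active per token: {active / 1e9:.3f}B of 120B")

# Hypothetical blocks rated 160 and 30 TOPS (nominal sum: 190).
even_split = effective_tops(160.0, 30.0, fraction_on_a=0.5)
ideal_split = effective_tops(160.0, 30.0, fraction_on_a=160.0 / 190.0)
print(f"even split: {even_split:.1f} TOPS, ideal split: {ideal_split:.1f} TOPS")
```

Under these assumed numbers, each token touches only about 6% of the model's weights, and an even split of work between the two blocks delivers roughly 60 effective TOPS rather than the nominal 190. Real schedulers and real workloads are more complicated, but the sketch shows why critics treat a headline parameter count and a summed TOPS figure with caution.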