ChartDiff offers the first large-scale benchmark for cross-chart reasoning — can models explain differences, not just read a single plot?

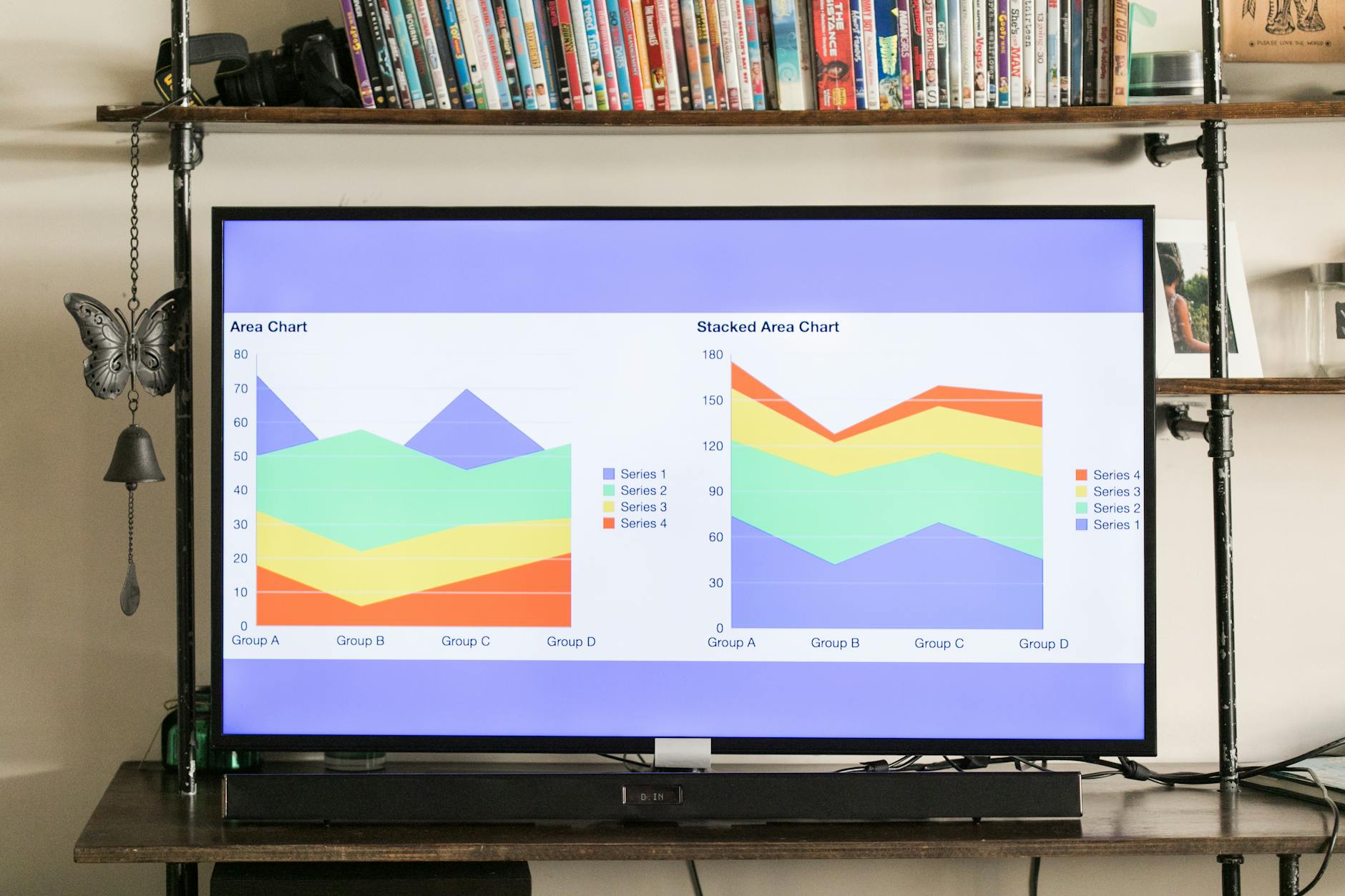

A new arXiv paper, ChartDiff (arXiv:2603.28902), introduces what its authors call the first large-scale benchmark focused specifically on comparative summarization of chart pairs. Charts are central to analytical workflows across science, business and journalism. Yet most existing benchmarks ask models to interpret single charts in isolation. ChartDiff aims to push models to answer a harder question: how do two charts differ, and what do those differences imply?

What ChartDiff contains and how it works

According to the arXiv posting, ChartDiff assembles a large corpus of aligned chart pairs annotated with cross-chart comparative summaries. The dataset reportedly contains "8,5..." examples in its current release, indicating thousands of annotated chart-pair instances. The benchmark frames tasks such as identifying relative trends, contrasting categories, and producing natural-language comparative summaries, and it includes evaluation splits and reference annotations to measure fidelity and factuality. The authors say the benchmark is intended to support both multimodal vision-language models and specialized chart-understanding systems.
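To make that description concrete, here is a minimal Python sketch of what one chart-pair record could look like. It is purely illustrative: the class name `ChartPairExample`, its fields, and the split labels are assumptions made for this article, not the schema of the actual ChartDiff release.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ChartPairExample:
    """Hypothetical record layout; NOT the released ChartDiff schema."""
    pair_id: str
    chart_a: Dict[str, List[float]]   # series name -> plotted values
    chart_b: Dict[str, List[float]]
    reference_summary: str            # human-written comparative summary
    split: str                        # assumed labels such as "train" or "test"


def split_sizes(examples: List[ChartPairExample]) -> Counter:
    """Count how many chart pairs fall into each evaluation split."""
    return Counter(ex.split for ex in examples)


examples = [
    ChartPairExample("p1", {"sales": [1.0, 2.0]}, {"sales": [2.0, 1.0]},
                     "Sales rise in the first chart but fall in the second.", "train"),
    ChartPairExample("p2", {"users": [5.0, 5.0]}, {"users": [5.0, 9.0]},
                     "Users are flat in the first chart and grow in the second.", "test"),
    ChartPairExample("p3", {"cost": [3.0, 4.0]}, {"cost": [3.0, 6.0]},
                     "Costs rise in both charts, faster in the second.", "train"),
]
print(split_sizes(examples))
```

A real loader would have to follow whatever format the authors publish; the point here is only that each example pairs two charts with one reference summary and a split label.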

Early results and practical implications

State-of-the-art multimodal models that do reasonably well on single-chart questions reportedly struggle on ChartDiff's comparison tasks, often missing nuanced relative claims or conflating unrelated axes. Why does this matter? Comparative reasoning is central to decision-making: spotting divergence between two time series or identifying a categorical shift across demographic groups are everyday analytical tasks, and automation here would augment, not replace, human analysts. ChartDiff gives researchers a concrete way to measure progress on that capability.
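As a rough illustration of the kind of relative claim involved, the sketch below compares the trends of two time series and emits a one-sentence comparison. It is a toy baseline written for this article, not a method from the paper: the function names, the least-squares slope heuristic, and the tolerance value are all assumptions.

```python
import math
from statistics import mean


def slope(values):
    """Least-squares slope of values against their index 0..n-1."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    x_mean = (n - 1) / 2
    y_mean = mean(values)
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def direction(s, tol=1e-9):
    """Label a slope as rising, falling, or flat (tol is an arbitrary cutoff)."""
    if s > tol:
        return "rising"
    if s < -tol:
        return "falling"
    return "flat"


def compare_trends(name_a, series_a, name_b, series_b):
    """Return a one-sentence comparison of two series' overall trends."""
    sa, sb = slope(series_a), slope(series_b)
    da, db = direction(sa), direction(sb)
    if da != db:
        return f"{name_a} is {da} while {name_b} is {db}."
    if da == "flat":
        return f"Both {name_a} and {name_b} are flat."
    if math.isclose(abs(sa), abs(sb)):
        return f"Both {name_a} and {name_b} are {da} at the same rate."
    faster = name_a if abs(sa) > abs(sb) else name_b
    return f"Both {name_a} and {name_b} are {da}, but {faster} changes faster."


print(compare_trends("Chart A", [1, 2, 3, 4], "Chart B", [4, 3, 2, 1]))
```

This heuristic works from the underlying numbers. A benchmark of this kind asks models to reach similar conclusions from the rendered charts themselves and to express them in fluent prose, which is a considerably harder problem.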

Openness, reproducibility and geopolitical context

The paper is hosted on arXiv, whose arXivLabs framework supports collaborative feature work under principles of openness and privacy. The authors reportedly plan to release code and annotations; reproducibility will depend on that release and on the broader research community's access to compute resources. At a time when international trade policy, export controls and sanctions increasingly shape who can run large-scale models, open benchmarks that are dataset-light or that enable efficient evaluation may be especially valuable. Will ChartDiff shift attention from single-chart accuracy to comparative understanding? The field will find out as researchers test models against this new, targeted challenge.